AI Risks Are Growing, but Global Insurers Say They Won't Cover the Damage [AI's Backlash]

- Published: 2026-04-28 18:41:32

- Updated: 2026-04-28 18:41:32

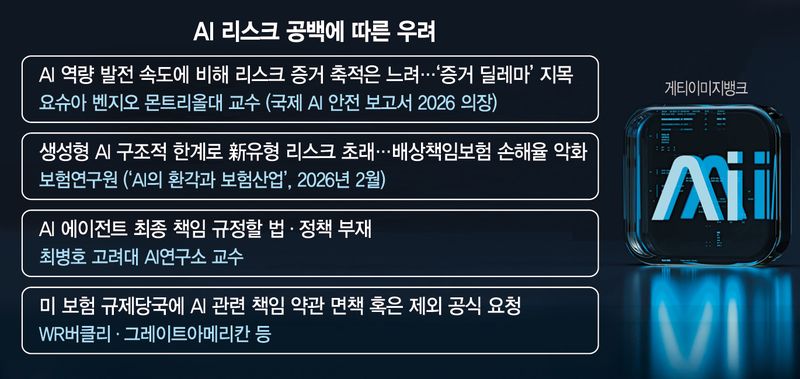

AI technology is advancing rapidly, but laws and institutions remain stuck in an older framework, deepening concerns over a liability gap. South Korea's Framework Act on the Development of Artificial Intelligence (AI Basic Act) contains only provisions to prevent misuse of AI or to assign limited responsibility, and it is unclear whether it can be applied to related industries such as insurance. The situation is similar overseas, where major insurers are urging financial authorities to establish exemption clauses for AI-related accidents.

■ Insurers, too, are avoiding the risk

According to industry sources on the 28th, global insurers have asked authorities to exempt them from liability for AI-related accidents. Data analytics firms that serve insurers are also indirectly signaling that exemption clauses for AI incidents are needed: Verisk, an insurance data analytics company, is reportedly including AI exemption clauses in its standard policy terms. Even in insurance, the industry at the front line of risk assessment, concern is growing that AI risks cannot be covered in the traditional way.

The International AI Safety Report 2026, whose authors include Yoshua Bengio, a University of Montreal professor often called a 'godfather of AI,' identified two key challenges: an 'evaluation gap,' in which AI systems fail in real-world settings in ways that pre-deployment tests did not predict, and an 'evidence dilemma,' in which AI capabilities advance quickly while evidence of risk accumulates slowly. The same problems lie behind global insurers' reluctance to underwrite AI risks.

■ Who is responsible for errors such as hallucinations and bias?

South Korea's insurance industry shares similar concerns. A report titled 'AI Hallucinations and the Insurance Industry,' released in February by the Korea Insurance Research Institute, noted that "the use of AI has become an essential condition for strengthening corporate management and national competitiveness, but the structural limitations of generative AI are creating new types of risks never experienced before, including hallucinations, bias and infringement."

The industry's biggest concern is that it is hard to determine who is responsible when an accident is triggered by an AI error. AI produces results through calculations involving billions of parameters, but its 'black box' structure makes it difficult to explain the internal path that led to a specific decision. With a traditional system, the cause of a vehicle crash or a medical misdiagnosis could be traced to something identifiable, such as sensor failure, a code bug or driver negligence. With AI, that is far more difficult: even when the same kind of accident occurs, it is virtually impossible to trace why the system made that judgment. If the cause cannot be identified, neither negligence nor liability can be established.

■ The legal framework is also in a vacuum... "There is still a long way to go"

The institutional framework is also in a vacuum. The Framework Act on the Development of Artificial Intelligence, which took effect this year, was designed mainly around preemptive obligations for businesses, such as ensuring transparency and safety. However, it does not specify from whom a victim can seek compensation after an AI accident, or how responsibility is divided among developers, companies and cloud providers. Unlike the EU Artificial Intelligence Act, which includes detailed rules for specific cases, South Korea's act is seen as remaining at a declarative level, merely stating that AI should be used safely. The government is reportedly working on related measures, but no concrete details have yet been presented.

Byung-Ho Choi, a professor at the Korea University AI Research Institute, said, "Even if authority is delegated to AI agents, the final responsibility ultimately lies with humans." He added, "We need laws and policies that define that responsibility, but compared with immediate threats such as Mythos, regulatory discussions are still at an early stage, so there is still a long way to go."

He continued, "AI at the level of Mythos is only the beginning, and more will keep emerging." He stressed, "As performance becomes more advanced, risks will grow as well, so it is urgent to establish a national response system that covers the entire AI infrastructure, from energy systems to security."

Reporter Jo Yoon-ju (yjjoe@fnnews.com)