Inference Becomes More Complex, Driving Operating Costs Higher... The More AI Is Used, the More Profits Decline [AI's Backlash]

- Published: 2026-04-27 18:29:32

- Updated: 2026-04-27 18:29:32

Token prices for artificial intelligence (AI) models are falling, yet so-called 'token inflation', in which total operating costs soar anyway, is intensifying. The cause is that advances in AI agents and reasoning capabilities have pushed token consumption per task up by as much as tens of times. As the global AI industry confronts overloaded computing infrastructure and moves to overhaul its existing pricing systems, analysts say the burden on companies that actually operate AI is growing.

■Costs fall, but usage surges

According to the IT industry on the 27th, global market research firm Gartner recently forecast in a report that AI inference costs will fall by more than 90% from current levels by 2030. For large language models with more than 1 trillion parameters, the firm said, cost efficiency could improve by as much as 100 times compared with 2022. It attributed the gains to better semiconductor performance, more efficient model architectures, and the widespread adoption of inference chips.

However, even if the unit cost of each computation falls thanks to technological progress, the total AI operating costs that companies and individuals actually bear are expected to rise. Global Big Tech firms are in fact locked in cutthroat competition and steadily lowering token prices, so the main driver of higher operating costs is the explosive increase in usage. As AI has evolved from simple question-and-answer tools into agents that carry out tasks on their own through complex reasoning, token usage per task has jumped by at least five times and as much as 30 times compared with before. This surge in computing demand more than offsets the drop in token prices, pushing total costs higher. Gartner noted that "actual token prices are falling rapidly, but usage is rising even faster," adding that "even if basic functions become cheaper, infrastructure for high-performance AI remains a limited resource and will continue to put pressure on costs."
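The arithmetic behind this can be sketched in a few lines. The example below is only an illustration, not Gartner's model: it assumes total cost is simply price per token times tokens used, and it combines the forecast 90% price drop with the 5x to 30x per-task usage increase even though the article gives those figures for different time frames. The function names are hypothetical.

```python
def relative_total_cost(price_drop: float, usage_multiplier: float) -> float:
    """Return the new total cost as a multiple of the old total cost.

    Assumes total cost = price per token * tokens used, so the ratio is
    (1 - price_drop) * usage_multiplier.
    """
    if not 0.0 <= price_drop < 1.0:
        raise ValueError("price_drop must be in the range [0, 1)")
    if usage_multiplier < 0:
        raise ValueError("usage_multiplier must be non-negative")
    return (1.0 - price_drop) * usage_multiplier


def break_even_usage(price_drop: float) -> float:
    """Return the usage multiplier at which total cost stays unchanged."""
    if not 0.0 <= price_drop < 1.0:
        raise ValueError("price_drop must be in the range [0, 1)")
    return 1.0 / (1.0 - price_drop)


if __name__ == "__main__":
    PRICE_DROP = 0.90  # illustrative: the forecast 90% fall in unit cost
    for usage in (5, 30):  # illustrative: the 5x and 30x per-task figures
        ratio = relative_total_cost(PRICE_DROP, usage)
        print(f"usage x{usage}: total cost is {ratio:.1f}x the original")
    print(f"break-even usage: x{break_even_usage(PRICE_DROP):.1f}")
```

Under these assumptions, a 90% price cut leaves the bill at roughly half its original level when usage grows fivefold, but roughly triples it when usage grows thirtyfold. Any usage growth beyond about tenfold wipes out the entire price reduction.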

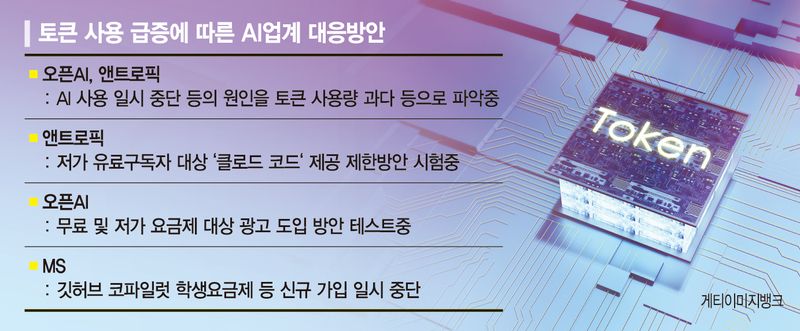

Recent service disruptions at major generative AI platforms such as OpenAI's ChatGPT and Anthropic's Claude are being interpreted as signs that computing demand has reached its limits, rather than simply reflecting temporary traffic spikes.

■Will Anthropic and others change pricing policies?

As infrastructure costs soar, AI companies are also revising their pricing policies.

With a uniform, unlimited flat-rate model now seen as virtually impossible to sustain, companies are rapidly shifting to usage-based pricing. Microsoft (MS) has temporarily suspended new sign-ups for plans such as its student offering. Anthropic, facing a sharp rise in computing demand and accusations that it deliberately reduced model performance, is testing restrictions on access to Claude Code for subscribers on lower-priced paid plans.

OpenAI launched an $8-per-month plan called ChatGPT Go earlier this year and is testing ads for users on free and low-cost plans.

As AI pricing shifts toward a model in which heavier use means a higher bill, corporate cost burdens are expected to rise exponentially. Byung-Ho Choi, a professor at Korea University's Human-Inspired AI Research Institute (HIAI), said, "Token costs inevitably surge as agents develop," adding, "For companies, this is a time to think seriously about how to use tokens more economically while maintaining performance."

Joon-Woo, Reporter (wongood@fnnews.com)