Ultra-Powerful AI ‘Mythos’ Triggers Global Cybersecurity and Financial Alarm

- Published: 2026-04-13 18:22:03

- Updated: 2026-04-13 18:22:03

The unveiling of Claude Mythos by Anthropic has effectively demonstrated that AI-driven cyber threats to financial systems and national infrastructure are no longer just a potential risk factor, but a real and present danger. As a result, more experts in Korea are calling on the government and financial regulators to quickly establish concrete response frameworks for AI-enabled cyber threats.

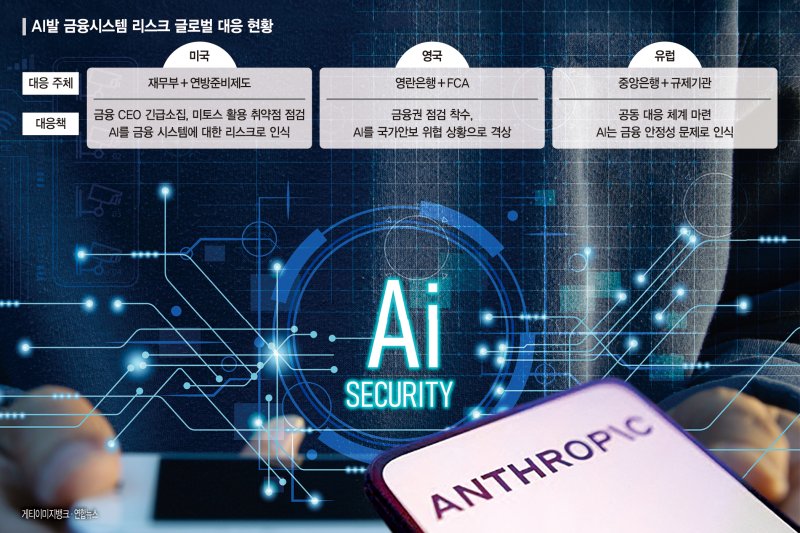

■ U.S. and U.K. elevate AI to a financial stability risk

According to reporting by Financial News on April 13, Anthropic released its high-performance AI model Claude Mythos on April 7 (local time). Immediately afterward, U.S. Treasury Secretary Scott Bessent and the Federal Reserve System (the Fed) convened an emergency conference call with the chief executives of major banks, including Goldman Sachs, Citibank, Bank of America and Morgan Stanley, to review their readiness for AI-based cyber risks. The call effectively served as a status check on how well major financial systems can defend against AI-driven attacks. During the call, the U.S. administration also reportedly urged bank CEOs to use Claude Mythos to strengthen the security of their banking systems.

In other words, even as officials warned that AI could become a powerful weapon for attacking financial systems, they also encouraged the use of AI to probe and reinforce those same systems. Analysts say this shows how the emergence of a powerful AI model has placed the U.S. government in a dilemma.

The United Kingdom has taken similar steps. The Bank of England (BoE), the Financial Conduct Authority (FCA) and the National Cyber Security Centre (NCSC) have jointly launched an emergency risk assessment targeting major financial institutions. Across Europe as well, central banks and regulators are moving in tandem, clearly reclassifying AI from a conventional “digital risk” to a system-wide risk directly tied to financial stability.

In Korea, the Financial Supervisory Service (FSS) urgently convened information security and cybersecurity practitioners from major banks and other financial institutions on April 13 for a review meeting on AI-driven security threats.

Claude Mythos was released as part of Project Glasswing, a joint security initiative that Anthropic operates together with big tech companies such as Google, Amazon and Microsoft. During testing, Anthropic reported that the model had discovered thousands of high-risk vulnerabilities across all major operating systems and web browsers worldwide. Anthropic stated, “Claude Mythos is the most powerful AI model we have developed so far,” and explained that, because it is exceptionally capable at detecting software security flaws and could be misused for hacking, the company plans to keep it closed and not release it to the general public.

■ AI-driven cyber threats could expose weaknesses in financial systems

Observers say that the first and strongest reactions to the release of Claude Mythos came from governments, regulators and financial institutions because of the structural and regulatory characteristics of the financial sector.

Major U.S. banks already classify cyberattacks as a form of systemic risk and are subject to regular stress tests and supervision by the Treasury Department and the Fed. Banks operate core infrastructure that is connected in real time, such as payment, clearing and funds transfer systems, so a failure at a single weak point can quickly spread across the entire network. The global financial system is also tightly interconnected through the Society for Worldwide Interbank Financial Telecommunication (SWIFT), credit card networks and the IT systems of large banks, meaning a disruption at one node can trigger cascading transaction failures. In addition, because financial institutions hold customer assets directly and depend on market confidence, any security incident can rapidly escalate into a liquidity crisis or bank run. For these reasons, authorities in the U.S. and U.K. appear to have immediately elevated AI’s potential cyberattack capabilities from a mere IT issue to a core financial stability concern.

■ Korea’s AI policy, focused mainly on utilization, needs to be reset

Korean financial regulators and security agencies are still approaching AI more from the perspective of maximizing its use than managing its risks, according to domestic experts. They note that Korea’s current AI policy, led by the government, centers on making AI convenient for everyone in the country to use and on fostering AI companies by providing GPU resources.

Financial authorities such as the Financial Services Commission (FSC) and the FSS have also issued guidelines and internal control standards for AI use in the financial sector. However, these focus mainly on data utilization, consumer protection and algorithmic transparency.

A concrete response framework that treats cyberattacks as a source of system-wide risk has yet to be developed. The Ministry of Science and ICT and the National Intelligence Service (NIS) both stress the importance of AI security, but integrated response systems and stress tests directly linked to financial infrastructure have not yet taken shape. The Bank of Korea (BOK) likewise has not formally addressed AI-based cyber risks as a core agenda item from a financial stability standpoint.

In this context, some experts argue that Korea should study why U.S. and U.K. governments and financial regulators acted so quickly after the release of Claude Mythos, and should benchmark their response measures. They also say it is time to fundamentally reconsider the focus of Korea’s AI policy.

As global AI competition shifts toward infrastructure capabilities—such as AI data centers, power supply and security competence—Korea’s AI strategy also needs to be adjusted, they contend.

A security expert who requested anonymity said, “Now that AI can both detect vulnerabilities and automate attacks, AI-driven security policy must be given higher priority than AI utilization strategies in overall AI policy and in the financial sector’s AI roadmaps.” The expert added, “Korea urgently needs to overhaul its AI policy for finance, including building AI-based attack scenarios targeting financial institutions, introducing cyber risk stress tests, and establishing an integrated response framework that links financial regulators, the central bank and intelligence agencies.”

Reporter Lee Gu-soon (cafe9@fnnews.com)