"Why did my Samsung and Hynix suddenly drop?" A small ball Google lobbed [AI Atlas]

- Published: 2026-03-29 10:00:00
- Updated: 2026-03-29 10:00:00

With that single blow, Korean chip bellwethers such as Samsung Electronics and SK hynix tumbled last week, and U.S. memory stocks also went into a steep slide.

In practical terms, it shows that you can compress the data used by an LLM to a reasonable degree and later decompress it with almost no loss in accuracy. When an LLM carries on a long conversation in a single session, it already performs its own compression to save memory. TurboQuant, as described in Google’s paper, is a technique that compresses far more efficiently than current methods while keeping accuracy largely intact.
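To make the compress-then-decompress idea concrete, here is a minimal sketch of plain uniform quantization. It is not TurboQuant, whose details this article does not give. The function names, the 4-bit setting, and the assumption that the original values are stored as 32-bit floats are all illustrative.

```python
import random


def quantize(values, bits=4):
    """Map floats to integer codes in [0, 2**bits - 1] (generic uniform quantization)."""
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1
    # Step size between neighbouring codes; fall back to 1.0 if all values are equal.
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, lo, scale


def dequantize(codes, lo, scale):
    """Reconstruct approximate floats from the integer codes."""
    return [lo + c * scale for c in codes]


random.seed(0)
# Stand-in for the numbers a model keeps in memory (hypothetical data).
original = [random.gauss(0.0, 1.0) for _ in range(1024)]

codes, lo, scale = quantize(original, bits=4)
restored = dequantize(codes, lo, scale)

# Worst-case reconstruction error is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(original, restored))
# Assumed storage: 32 bits per original value versus 4 bits per code.
compression_ratio = 32 / 4

print(f"max error: {max_error:.4f}, step: {scale:.4f}, ratio: {compression_ratio:.0f}x")
```

The sketch trades precision for space: fewer bits per value means less memory but a larger step size and therefore a larger error. A technique that holds error down at a low bit count is what would let the same hardware carry more.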

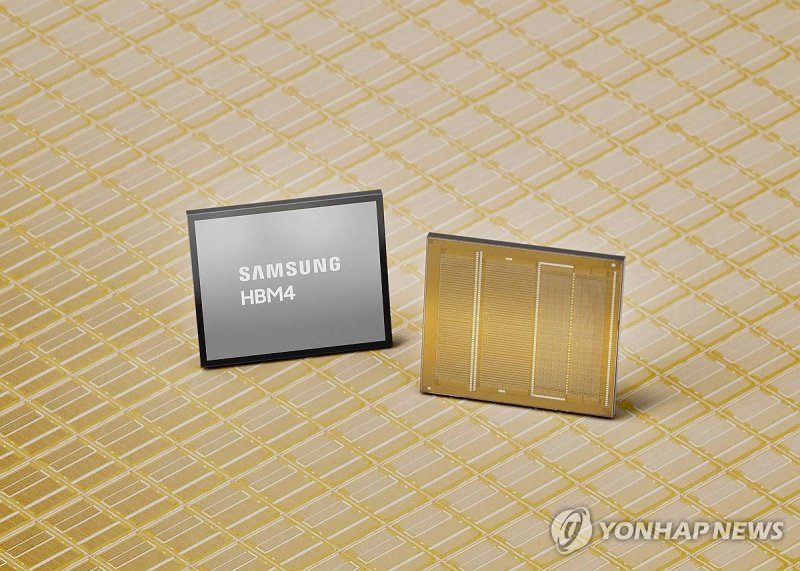

Google logo. Photo by Newsis News Agency / AP.

"So we can get by with less memory?"

In the AI hardware market, where High Bandwidth Memory (HBM) is as crucial as the Graphics Processing Unit (GPU), the publication of this paper had an immediate impact.

In practice, Google is highly likely to start validating TurboQuant by applying it directly to products such as its Gemini LLM and various DeepMind AI services.

A direct hit to semiconductor and storage stocks

Market reaction right after Google’s announcement was brutal.

4%. Storage specialists SanDisk (SNDK) and Western Digital (WDC) plunged about 11% and 7%, respectively.

Semiconductor materials, parts and equipment stocks likewise fell across the board on concerns about a slowdown in the industry cycle.

Samsung Electronics' HBM4 high-bandwidth memory. Photo by Yonhap News Agency.

Will the 'Jevons paradox' apply this time too?

A heated debate is unfolding in the market.

Just as OpenAI’s ChatGPT was shaped by earlier research on the attention mechanism, TurboQuant is highly likely to be adopted by the AI industry in the near term. Because AI companies must constantly upgrade their hardware, they have every incentive to implement a technology that can dramatically improve memory efficiency as quickly as possible.

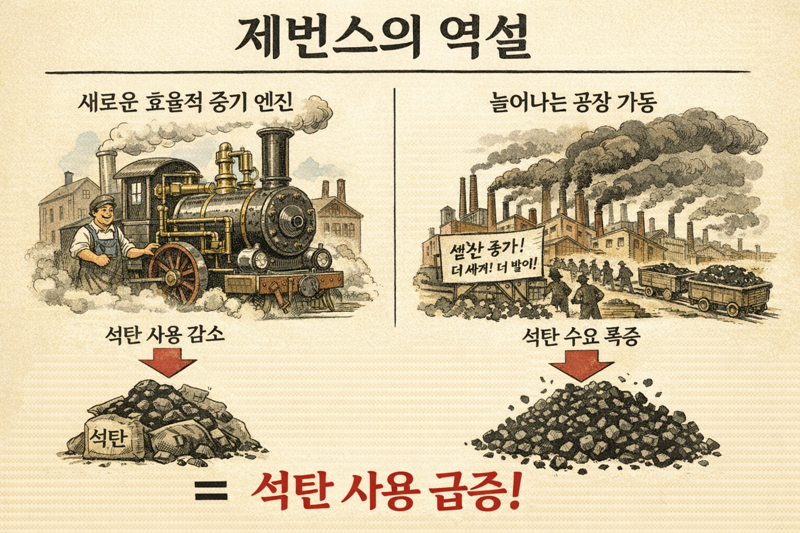

An illustration of the Jevons paradox generated with ChatGPT.

The argument that memory demand will surge over the long term also deserves attention. This is where the economic concept known as the "Jevons paradox" comes in. It describes how improvements in the efficiency of resource use can actually increase total consumption of that resource.

Applied to AI memory, the logic is this: as TurboQuant lowers the cost of running AI, companies will roll out more AI services, which in turn will drive much larger overall demand for memory. Each device may use memory more efficiently, but the number of devices and services using AI will grow exponentially.

As a result, analysts argue, the total addressable market for semiconductors could end up far bigger than it is today.
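The arithmetic behind this argument can be sketched in a few lines. Every number below is hypothetical and chosen only to illustrate the mechanism. None of them comes from Google's paper or from any analyst forecast.

```python
def total_memory_demand(deployments, memory_per_deployment_gb):
    """Total memory demand = number of AI deployments x memory each one needs."""
    return deployments * memory_per_deployment_gb


# Hypothetical baseline: 100 deployments, 80 GB each.
before = total_memory_demand(deployments=100, memory_per_deployment_gb=80.0)

# Hypothetical outcome: compression halves memory per deployment,
# while cheaper operation triples the number of deployments.
after = total_memory_demand(deployments=300, memory_per_deployment_gb=40.0)

growth = after / before
print(f"before: {before:.0f} GB, after: {after:.0f} GB, growth: {growth:.2f}x")
```

Total demand rises only if the number of deployments grows faster than per-deployment memory shrinks. If adoption merely doubled in this example, total demand would be flat, which is why the market debate turns on how strongly usage responds to lower costs.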

ksh@fnnews.com Kim Sung-hwan Reporter