"If You Mix Sleeping Pills and Alcohol, Can It Kill Someone?" Asked ChatGPT Before Murder... AI Misuse Becomes Reality

- Published: 2026-02-22 15:42:01
- Updated: 2026-02-22 15:42:01

[Financial News] Concerns over the misuse of artificial intelligence are mounting after it emerged that the suspect in the "Gangbuk-gu motel double murder case," who is accused of luring men to a motel and killing them with drugs, allegedly consulted generative AI shortly before the crime. Experts point to stronger accountability for platform operators as one of the key countermeasures.

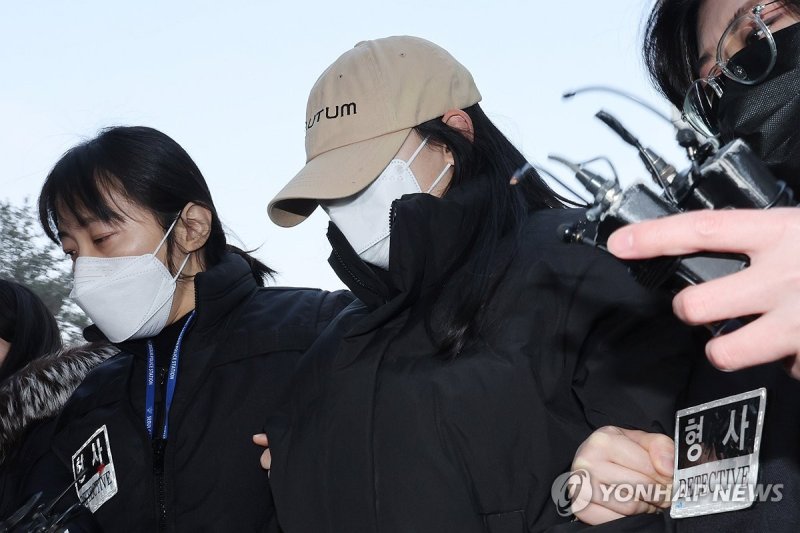

According to police on the 22nd, the Seoul Gangbuk Police Station on the 19th sent Kim, a woman in her 20s, to prosecutors on upgraded charges of murder and aggravated assault. She had initially been investigated on charges including causing bodily injury resulting in death. Investigators concluded that she acted with intent, based in part on records of her queries to OpenAI's ChatGPT found on her mobile phone. Kim had asked ChatGPT questions such as, "What happens if you take sleeping pills and alcohol together?", "How much of both becomes dangerous?", and "Can it be fatal?" Police treated the queries themselves as evidence that she recognized the possibility of causing death.

When this newspaper posed similar questions to ChatGPT and to Naver HyperCLOVA X, both systems provided concrete answers about the risks and possible causes of death. We did not use any workaround prompts designed to bypass AI safety filters, such as saying, "This is not for a crime" or "I need it for research." Even so, the responses were detailed enough for someone without specialized knowledge to understand and act on immediately.

Some of the answers also matched Kim’s alleged method of committing the crime. Both platforms pointed out that certain medications used as tranquilizers can slow neural activity and thereby increase the risk of death. They also explained that sleeping pills and alcohol are both central nervous system depressants and that taking them together can amplify their effects and greatly heighten the danger. According to police, Kim actually mixed a large amount of a specific drug into beverages and handed them to the victims, who were already intoxicated at the time. This suggests she may have used the generative AI responses as a reference when planning the crime.

Civil society groups and academics have long argued that the possibility of generative AI being exploited for criminal purposes cannot be dismissed. They warn that, because anyone can ask questions anytime and anywhere, crimes could not only increase in number but also become more sophisticated in method.

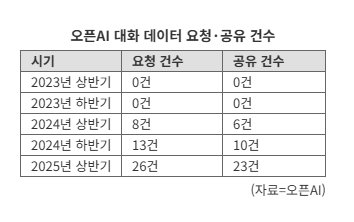

Requests from investigative authorities for cooperation from generative AI platform operators are also rising steadily. According to OpenAI's transparency report on government requests for user data, the number of law enforcement requests for user data rose from zero in both halves of 2023 to 8 in the first half of 2024, 13 in the second half of 2024, and 26 in the first half of 2025, more than tripling in just one year. Observers say the surge reflects a growing number of cases in which conversations with ChatGPT are believed to have influenced criminal behavior. The number of instances in which OpenAI actually shared data with investigative bodies also climbed, from 6 in the first half of 2024 to 10 in the second half of 2024 and 23 in the first half of 2025, nearly a fourfold increase over one year.
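The one-year multiples cited above can be checked with a quick calculation. The sketch below is illustrative only: the counts are the ones quoted in this article, while the variable and function names are hypothetical and not taken from OpenAI's report.

```python
# Half-year counts as quoted in this article (attributed to OpenAI's
# transparency report). All names below are hypothetical and illustrative.
requests_received = {"2024-H1": 8, "2024-H2": 13, "2025-H1": 26}
data_disclosed = {"2024-H1": 6, "2024-H2": 10, "2025-H1": 23}


def growth_ratio(series, start, end):
    """Return how many times larger the end-period count is than the start-period count."""
    return series[end] / series[start]


# Compare the first half of 2024 with the first half of 2025 (one year apart).
request_growth = growth_ratio(requests_received, "2024-H1", "2025-H1")
disclosure_growth = growth_ratio(data_disclosed, "2024-H1", "2025-H1")

print(f"Requests grew {request_growth:.2f}x over one year")
print(f"Disclosures grew {disclosure_growth:.2f}x over one year")
```

On these figures, requests grew 3.25 times (26 divided by 8) and disclosures about 3.83 times (23 divided by 6), which is consistent with "more than tripling" and "nearly a fourfold increase."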

Experts note that there is currently no clear, quick solution for training generative AI to reliably grasp the intent and context behind users’ questions. They add that safety mechanisms can be easily bypassed if a user falsely claims to be conducting medical research or gives other misleading explanations.

Byung-Ho Choi, a research professor at the Human-Inspired AI Research Institute, Korea University, stated, "Investing in these safeguards is often neglected because it can hurt the performance of generative AI models and increase costs."

Lee Yoon-ho, emeritus professor at the College of Police and Criminal Justice, Dongguk University, said, "We need to build systems so that generative AI does not include potentially harmful content in its answers," adding, "It is necessary to ensure that these platforms fully live up to their social responsibilities."

Reporter Seo Ji-yoon (jyseo@fnnews.com)